April 2026: AI Liability

Jury verdicts, proposed changes to the Colorado AI Act, new Oregon and Washington chatbot laws, and FTC enforcement actions bring AI liability questions front and center

Happy Spring!

It was great to see so many of you at IAPP. Between our privacy executives' luncheon with Mike Macko from CalPrivacy and countless conversations in the Marriott lobby, it was the kind of busy and chaotic week that reminds us why we do this work. On the FPF Legislation team, we're in the weeds daily—debating textual nuances, tracking incremental policy shifts, parsing regulatory language—and then we send it out into the world, hoping it lands. So when you tell us how our work helps you or your clients navigate the landscape, it means everything. Thank you for those conversations, for using what we produce, and for inspiring us with new ideas and challenges.

The FPF U.S. Legislation Team (left to right): Daniel Hales, Jordan Wrigley, Tatiana Rice, Justine Gluck, Jordan Francis.

With that said, let’s jump into this month’s developments.

Between social media jury verdicts in New Mexico and California, proposed revisions to developer-deployer liability in Colorado's AI Act, new chatbot laws with private rights of action (PRAs) in Oregon and Washington, and FTC enforcement on data sharing for AI training, April made AI liability impossible to ignore. Don’t forget to check out FPF’s newest resources at the bottom!

1. Juries in New Mexico and California Find Social Media Companies Liable Under Consumer Protection and Product Liability Laws

Back in January, we predicted 2026 would be the year policy meets the courts. March proved us right—and then some.

In New Mexico (D-101-CV-2023-02838), a jury found Meta violated the state’s Unfair Practices Act (UPA) by misleading consumers about platform safety and endangering children through platform design choices. Specifically, the jury found that Meta failed to restrict the distribution of CSAM and to obtain parental consent for child data collection, purposefully designed its platform to be “addictive,” and claimed that the platform was safe for children and teens despite known safety risks. Notably, the AG’s public nuisance claim is scheduled for a bench trial starting May 4, where the AG seeks additional injunctive relief that would require “effective age verification, removing predators from the platform, and protecting minors from encrypted communications that shield bad actors.” The day after the New Mexico verdict, a California jury (JCCP5255) found Meta and YouTube liable under products liability and negligence theories for platform design that caused severe mental distress. As in the New Mexico case, the jury considered whether features like infinite scroll and algorithmic recommendations contributed to mental health issues, and ruled they did.

Though both Meta and Google have stated they intend to appeal the decisions, there are immediate implications:

Compliance Effects: Companies, both large and small, are now on heightened notice for the design of their digital products under consumer protection and product liability frameworks. At a high level, the litigation risk alone may drive compliance efforts that state youth safety laws have attempted to achieve for years: implementing robust parental controls and consent mechanisms for minors, reassessing engagement-maximizing features (infinite scroll, autoplay, algorithmic recommendations) against risk of "addictive" design claims, ensuring marketing and safety representations are substantiated and not misleading given known risks, and developing frameworks to balance interactive design against features that encourage overuse or harmful dependence. For companies building AI products—particularly chatbots—these verdicts may signal similar scrutiny ahead.

The Fate of Section 230: Section 230 of the Communications Decency Act shields platforms from liability for user-generated content they host or moderate, a protection the Supreme Court left undisturbed in Gonzalez v. Google (2023) when it declined to rule on the statute's scope. The policy rationale behind Section 230 is that platforms function as conduits for user speech, not as publishers responsible for every post, and that imposing liability for third-party content could chill expression and prove unworkable. However, both verdicts here bypassed 230 by targeting product design (infinite scroll, autoplay, engagement algorithms) as defective under consumer protection and tort law, not as content moderation failures. The policy distinction arguably holds: these features aren't speech but product choices shaping user interaction, and the harms are foreseeable. But the line between product liability and content immunity blurs significantly with chatbots, where the product, arguably, is speech (e.g., the bot's outputs, discussed below).

2. Colorado Work Group Proposes Revisions to AI Act

On March 17, the Colorado AI Policy Work Group released its proposed revisions to the Colorado AI Act (CAIA). The core proposed revisions include:

Revised Scope: The new text moves from “high-risk AI” to “covered ADMT.” “Covered ADMT” is defined under the proposed revisions as any technology that processes personal information (a new factor) and “materially influences” a consequential decision, meaning it is a “non-de minimis factor” that meaningfully alters the outcome of the decision. Currently, “high-risk AI” under the CAIA includes any AI system that is a substantial factor in a consequential decision, meaning it is capable of altering the outcome of the decision. However, the proposed revisions also appear to expand the definition of “consequential decision” to include any decision relating to education, employment, housing, etc. The current CAIA text, by contrast, requires that the decision have a legal or similarly significant effect on the provision or denial of, or cost or terms of, those enumerated areas (a formulation common to data privacy laws).

Shift Away from Algorithmic Discrimination: The proposed revisions remove the algorithmic discrimination provisions of the CAIA, including the duties of care, developer requirements to disclose bias risks to deployers, and deployer requirements to conduct an impact assessment for risk of algorithmic discrimination, amongst others. The only mention of risk is the requirement for developers to disclose “known risks” to deployers and “risk mitigations” when there is a material ADMT update (though risk mitigation measures are not required by the revised text nor required upon initial disclosure to the deployer).

Removal of Substantive Governance Provisions: The proposed revisions remove most core components of meaningful AI governance, including requirements for developers to disclose performance and risk mitigation measures, and requirements for deployers to maintain a risk management program, impact assessments, and human oversight and accountability mechanisms. So what remains in the revised text? Four key components: (1) Developer documentation to deployers regarding ADMT use, training data information, and limitations; (2) Consumer transparency regarding when ADMT is used for a consequential decision; (3) Deployer disclosures to consumers when an adverse outcome results from an ADMT, with instructions on how to request additional information or human review (to be clear, the language does not appear to grant consumers a right to appeal that a business must honor or respond to; it only requires the business to inform the consumer how to make such a request “if available”); and (4) Liability provisions regarding enforcement, allocation of responsibility between developers and deployers, and exemptions.

Role-Specific Liability Limitations: The proposed revisions also aim to clarify the allocation of responsibility between developers and deployers under existing anti-discrimination law (ADL). They state that both developers and deployers may be held liable for violations of ADL arising from a covered ADMT, with some caveats: (1) Developers may only be liable when the deployer used the ADMT as intended and documented by the developer; (2) Parties may not enter into indemnification agreements (except in instances where the deployer acts outside the scope of the intended uses or documentation); and (3) Use of an ADMT is not a defense under ADL or consumer protection laws.

On policy, these revisions raise questions regarding the purpose of the law. When CAIA was enacted in 2024, the legislation aimed to clarify how to develop and use AI in a manner that complied with existing ADL and to provide meaningful transparency to affected individuals. Though less than perfect, it was considered at the time a win for industry and civil society alike. Industry wanted clarity and predictability as civil rights lawsuits regarding discrimination resulting from AI tools were starting to arise (see, e.g., Mobley v. Workday), and proactive requirements provided compliance teams with clear rules of the road to mitigate liability risk. Civil society wanted stronger accountability, with companies proactively conducting diligence before releasing tools that could exacerbate existing inequalities, or, at a minimum, some form of transparency, recourse, or documentation to support enforcement of existing ADL (see, e.g., K.W. v. Armstrong as an example of the judicial difficulties of assessing AI decision-making processes at the time). To that end, these proposed revisions do little to effectuate the underlying goal beyond transparency. However, they may address political issues that arose during the years of heated negotiations between local VCs, industry, and consumer advocates.

3. Oregon and Washington Enact Chatbot Laws

Don’t be fooled by the label of “chatbot legislation.” Oregon’s SB 1546 (signed March 31) and Washington’s HB 2225 (signed March 24) apply to any AI system designed to simulate or sustain a relationship, which arguably covers most consumer-facing LLMs with personalization features. While both laws exempt tools used only for customer service, internal business, and technical assistance, entities will likely need to evaluate applicability on a use-case-by-use-case basis, since most general-purpose LLM tools rarely serve only one purpose and often include these anthropomorphic features. These nitpicky ambiguities may not always matter to reasonable enforcers, but these laws’ PRAs may allow some creative plaintiffs’ attorneys to push the boundaries.

Requirements: Modeled after California SB 243 (enacted 2025, effective Jan 2026), these laws require operators to disclose to users that they are interacting with an AI system and to maintain safety protocols to detect and prevent self-harm and suicidal ideation. For minor users, operators must institute reasonable measures to prevent the chatbot from producing or suggesting sexually explicit conduct. Oregon’s law further requires operators to implement reasonable measures to prevent chatbots from claiming sentience, simulating emotional dependence, or simulating romantic interest or a relationship. Oregon and Washington also go further than California by requiring operators to prevent engagement optimization for minors.

PRAs: Like California SB 243, both laws carry PRAs with an injury requirement (WA’s is a “backdoor PRA” provided through the state’s Consumer Protection Act). Because the injury requirement likely limits the volume of litigation under these laws, they are more likely to create additional grounds of liability for individuals who already have tort claims against operators than to bring about standalone litigation. It will be interesting to see whether litigants use privacy violations (under the Oregon Consumer Privacy Act or COPPA) as the predicate injury for these claims, similar to how the FTC successfully alleged privacy violations as injury in Kochava.

Ripe for First Amendment Challenge? Requirements to prevent engagement optimization and restrict sexually explicit content may face First Amendment challenges. These provisions may be more narrowly tailored than broader "harmful content" laws—targeting specific chatbot harms rather than sweeping social media or app-level restrictions—but could still be overbroad if minors use LLMs like search engines. The practical problem is enforcement: "sexually explicit content" and "suggestive dialogue" are subjective categories vulnerable to politicized interpretation. Given documented efforts to censor LGBTQ+ content and restrict minors' LGBTQ+ exposure, politicized enforcement may outweigh potential harm reduction, particularly when alternative regulatory approaches exist.

Folks interested in the chatbot developments should also keep an eye on Georgia’s SB 540 (passed legislature March 27, awaiting enrollment), which follows a model similar to the OR and WA laws but also blends in other child online safety requirements, such as parental controls and age assurance.

4. Federal AI Proposals Show Continued Negotiations on Youth Issues and Chatbots

On March 20, the White House released its “National Policy Framework for Artificial Intelligence,” outlining seven key policy strategies in developing a “light touch” unified federal AI approach. The four-page document calls upon Congress to create a legislative proposal that, amongst other things: requires AI platforms to implement parental controls, limits on harmful content, and age assurance; creates regulatory sandboxes and sector-specific regulations; and, of course, preempts state AI laws (except as they relate to enumerated areas such as child safety, general consumer protection, and government use of AI).

The framework came shortly after Sen. Blackburn (R-TN) released her own 270-page omnibus AI proposal, titled the “TRUMP AMERICAN AI Act.” Notably, despite its name, the bill sets forth an aggressive AI regulatory structure and does not (yet) include any provisions preempting state AI laws. Drawing on several bills already introduced, the discussion draft bans companion chatbots for minors and requires age verification (GUARD Act), creates a CA SB 53/NY RAISE Act-style frontier model regulation (AI Risk Evaluation Act), creates a new federal cause of action for products liability against AI developers (AI LEAD Act), and requires third-party audits for high-risk AI systems to prevent discrimination based on political affiliation [insert snarky remark about the irony].

These developments highlight two key points:

Minors’ Chatbots as the Negotiation Point on AI: Chatbot regulation for minors has fractured Republican AI policy into three negotiating blocs. Historically, the White House and AI accelerationists opposed state-level chatbot regulation, blocking leading proposals in red states like Utah and Florida. Congressional Republicans, however, have signaled that kids’ online safety remains a key part of their agenda, with both Sen. Blackburn’s proposal and the House E&C youth package (passed out of committee on March 5) regulating chatbots (SAFE BOTS Act). This Congressional positioning likely explains why the White House AI Framework now prioritizes regulation of children's AI platforms despite previous opposition. A third bloc complicates potential compromise and consensus: traditional conservative libertarians favor free markets and free speech, and oppose chatbot restrictions—particularly those limiting access to speech.

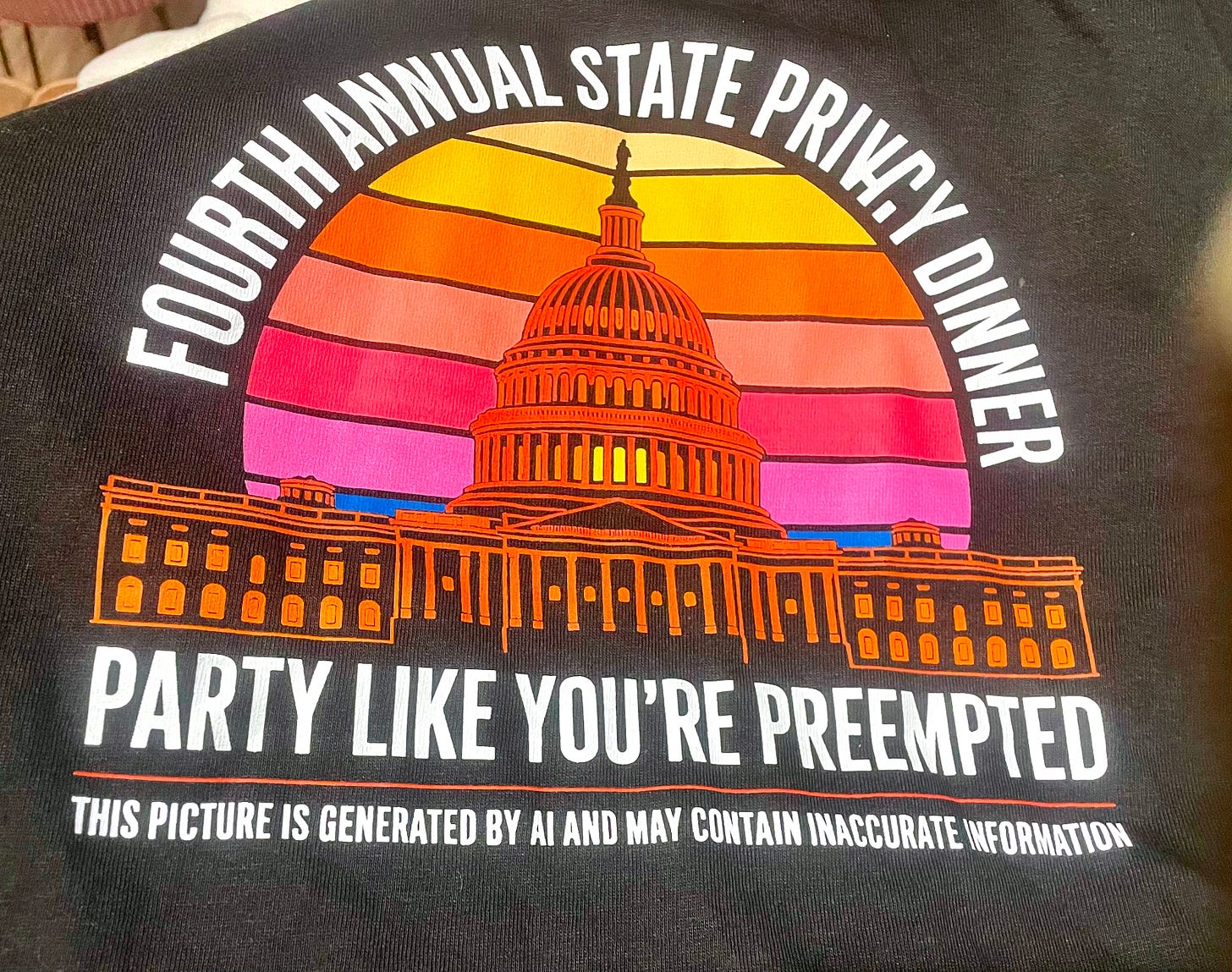

Where does privacy legislation fit? As most folks await AI developments on the Hill, we privacy nerds continue to await the discussion draft of a long-rumored federal privacy bill. Privacy legislation may lack AI's current cachet, but it directly regulates AI through common provisions on automated processing and automated decision-making technology (ADMT) that produce legal or similarly significant effects, as well as limitations on how entities may collect and use personal data for AI training purposes. Given these intersections, Rep. Obernolte (R-CA) is reportedly working to align any privacy bill with emerging federal AI policy. Once a discussion draft emerges, privacy practitioners will party like we're preempted—celebrating a uniform federal framework unlikely to succeed in an election year, but toasting to the fantasy anyway.

Photo credit to Dave Stauss (Troutman Pepper Locke) and Cobun Zweifel-Keegan (IAPP)

5. FTC Finalizes Settlement Regarding Data Shared with Third Party for AI Training

On March 30, the FTC announced a settlement with dating app Humor Rainbow/OkCupid over allegations the company shared user photos, as well as demographic and location data, with a third party for AI training without disclosing the transfer in its privacy policy or obtaining user consent. The enforcement action, one of Chair Ferguson's first major privacy cases, may signal that the Commission views undisclosed data sharing for AI training as actionable under Section 5 of the FTC Act.

Though the complaint may not capture it, the background of the case is seriously intriguing, involving a New York Times investigation and a major cover-up. For the full story, read Mary Bennett and Rob Robinson’s analysis on JD Supra. But the TLDR is: after the CEO of Clarifai (the third party) requested user photos for AI training, an OkCupid co-founder transmitted three million photos, alongside demographic and location data, via personal email, bypassing corporate processes and data-sharing protocols and contradicting the privacy policy's disclosures. When The New York Times investigated, OkCupid told users that suggestions it had shared data with Clarifai were "false."

The Deceptive Conduct: Chair Ferguson has vocally opposed using Section 5 to regulate AI directly, so this complaint's framing is telling. Reasonable minds could differ on what constituted the core deception: was it sharing personal data for AI training without notice or consent, the company's direct lie to users denying the allegations, or both? The complaint itself doesn't clearly disambiguate. Notably, it states that "The third party was not a party with whom Humor Rainbow was permitted to share users' personal information and Humor Rainbow never gave users an opportunity to opt out of having their personal information shared." Such language could imply that Section 5 requires entities to provide opt-out rights for data sharing, though the Commission doesn't make that argument explicitly either.

BIPA Did It First: Interestingly enough, the same data transfer also triggered litigation under Illinois' Biometric Information Privacy Act (BIPA) years ago. In Stein v. Clarifai, Inc., an Illinois OkCupid user filed a class action alleging Clarifai obtained his facial geometry templates by scanning user photos without consent. The court ultimately dismissed on jurisdictional grounds, but the overlap illustrates that AI training on consumer data implicates both consumer protection frameworks and privacy statutes simultaneously. Privacy violations increasingly are AI issues: undisclosed data collection, inadequate consent mechanisms, and analysis of human features and behaviors all fuel model development. It is no longer viable (if it ever was) for entities to treat AI governance and privacy compliance as separate tracks.

Hot From the Presses:

2026 Chatbot Legislation Tracker: Highlights chatbot-related legislation in 2026, including bills that have passed at least one legislative chamber. This tracker reflects a subset of FPF’s broader legislative tracking work.

Mapping the Chatbot Landscape: Details definitional patterns and common regulatory provisions appearing across chatbot bills.

Privacy Protections Coming Sooner Rather Than Later to the Sooner State: Covering the 20th state to enact a comprehensive consumer privacy law.

Incentives or Obligations? The U.S. Regulatory Approach to Voluntary AI Governance Standards: Exploring how voluntary AI frameworks are playing a role in AI legislation and litigation.

Navigating Autonomy and Privacy in Emerging AgeTech: Discussing fundamental questions about age tech used to support older adults as explored in a recent FPF roundtable.

Red Lines Under the EU AI Act: My global colleagues continue to publish important new analysis about each of the prohibited practices under the EU AI Act, including biometric recognition, emotion recognition, and image scraping.

That’s all for now. Thank you for reading, and see you in May!

The Algorithmic Update is a monthly newsletter highlighting key legislative, regulatory, and legal developments in privacy, AI, and tech policy. FPF members receive weekly legislative updates with deeper analysis and tracking, as well as member-exclusive resources—learn more here or contact membership@fpf.org.

Tatiana Rice is the Senior Director of Legislation at the Future of Privacy Forum (FPF).