May 2026: Springtime Negotiations

Congress debates a federal privacy framework, Colorado revises its AI Act under legal pressure, and Connecticut finally advances its AI package—showcasing key negotiations shaping technology policy

Happy May!

Spring has arrived, bringing cherry blossoms and the season of legislative negotiation. This month, House Republicans released their federal privacy framework modeled on state laws, Colorado continues revising its contested AI Act amid lawsuits and federal intervention, Connecticut finally advanced its omnibus AI package after two years of attempts, and Maryland enacted a new data-driven pricing law. Each represents compromise, revision, and the messy work of finding common ground on technology policy’s most contentious issues.

Because this issue covers these developments in greater depth than usual, it highlights four major developments rather than the typical five—but these four capture the month’s most significant policy movement.

Let’s dig in.

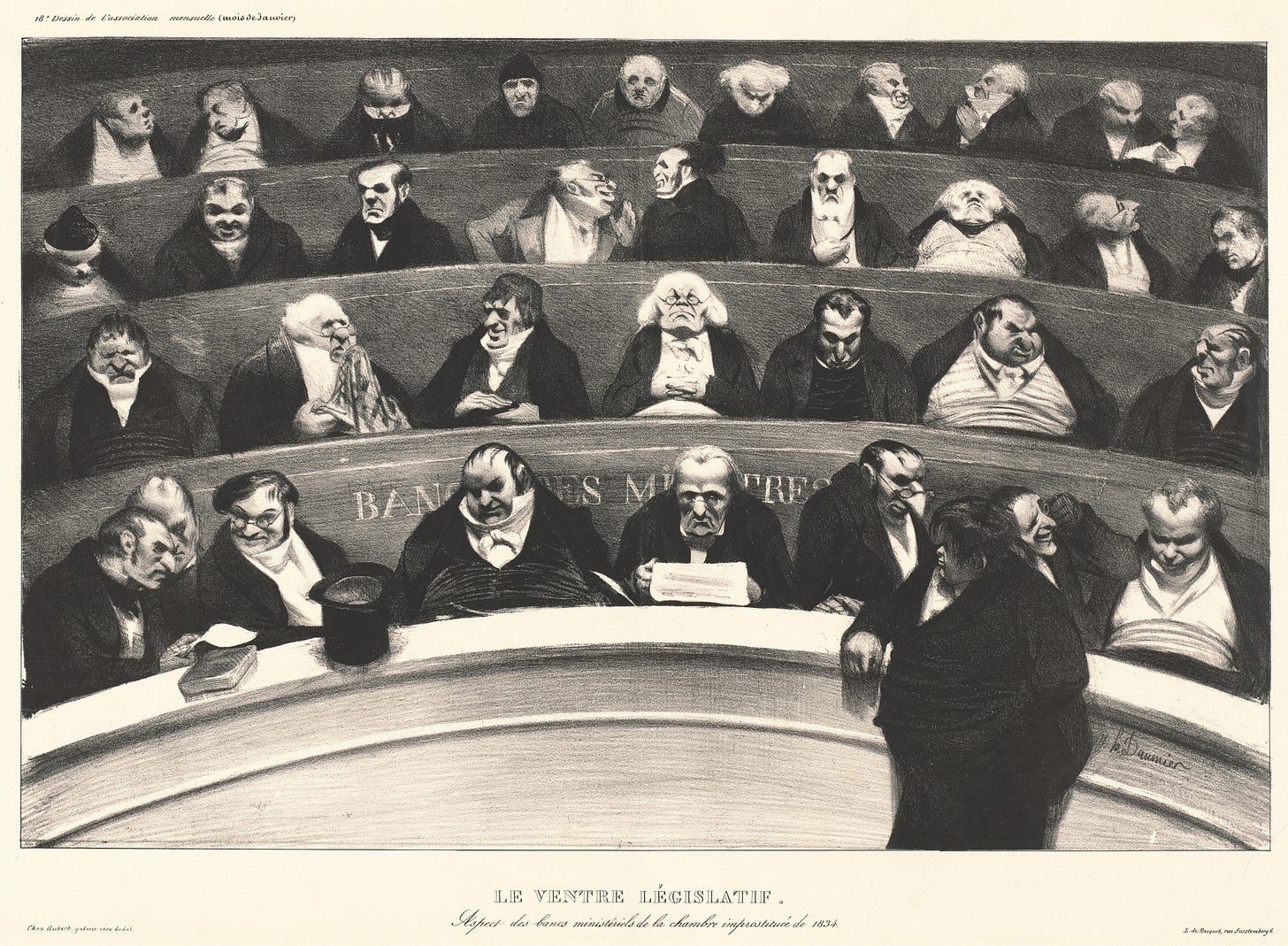

Honoré Daumier (1843), “Le Ventre Législatif (The Legislative Belly).” This object’s media is free and in the public domain. A fitting reminder that behind every negotiation are those who feast while others debate.

1. House Republicans Introduce Federal Privacy Bill Based on State Frameworks

Following months of rumors and anticipation, the House Energy & Commerce Republican data privacy working group released its federal consumer privacy bill, H.R. 8413, the “Securing and Establishing Consumer Uniform Rights and Enforcement Over Data Act” (SECURE Data Act). While the bill’s content and approach continue to be debated, its greatest significance is that it is the first federal framework explicitly modeled on state privacy laws, specifically the "Washington Privacy Act" (WPA) framework now adopted by twenty-one states. Whether or not this bill passes, it sets a precedent by using a framework already tested in both red and blue states, offering a useful alternative to the fragmented approaches seen in the American Data Privacy and Protection Act (ADPPA) and the American Privacy Rights Act (APRA).

Nonetheless, assessments of the bill’s substance have varied: some characterize it as weaker than existing state frameworks, while others see it as reflecting state consensus and creating uniform rules that eliminate the "patchwork" of state privacy laws (though the widespread adoption of the WPA framework suggests the patchwork is more like variations on a template than fundamentally incompatible approaches). FPF’s analysis found that while the bill at large aligned most closely with narrower red state iterations of the WPA framework, such as the laws in Kentucky, Iowa, Tennessee, Utah, and Alabama’s recently enacted law, there was more nuance than most commentators suggest (see FPF’s full analysis here). Note: We excluded California from this comparison as a non-WPA framework, though the bill is consistently narrower and less prescriptive than the CCPA's requirements.

TLDR on How the Bill Compares to the State Landscape:

Adopts some of the narrowest approaches found in some state WPA laws (definitions around biometric data and sale, and certain consumer rights); and

Omits requirements common to many state WPA laws (data protection impact assessments, recognition of universal opt-out mechanisms);

BUT -

Includes provisions absent from the narrowest WPA state frameworks (data minimization, anti-discrimination protections); and

Introduces elements beyond typical WPA state approaches (federal data broker registry, teens’ data as sensitive data, codes of conduct).

While most key substantive observations are detailed in the FPF analysis, some important policy considerations to note:

Reactions from Industry: Notable industry trade associations have expressed explicit support, emphasizing the bill's promise to end the "patchwork" of state laws while remaining largely silent on substantive provisions. Individual industry members have been notably quiet — a possible hedge given relationships with state lawmakers and the possibility of Democrats retaking the House in November.

Reactions from Civil Society: Academics and privacy advocates have expressed strong opposition to the bill, including pointed analysis of how it places disproportionate burdens on individuals rather than establishing meaningful mechanisms to constrain industry practices, lacks strong data minimization requirements, and fails to recognize universal opt-out mechanisms. In their view, these weaker provisions would preempt stronger protections in several states.

Likelihood of Success: The bill likely functions as an initial negotiating posture rather than a consensus product. While significant consensus-building could facilitate committee passage, 2026 is an election year, and Senate action appears unlikely. However, because the bill intersects with international data flows, kids’ online safety, and AI regulation, the SECURE Data Act will likely shape future privacy, safety, and AI legislation even without passage. Observers can probably expect a Subcommittee markup in the coming weeks, followed by a full Committee markup.

2. Revisions to the Colorado AI Act Continue, While Enforcement Is Delayed

Drama continues to swirl around the contested Colorado AI Act (CAIA) (SB 205). Earlier this month, xAI filed a lawsuit seeking to block enforcement of the law, alleging various constitutional violations, including violations of the First Amendment and the Due Process Clause. A few weeks later, the U.S. DOJ intervened in the lawsuit in support of enjoining the law. A federal judge has now approved a joint motion by xAI and the Colorado Attorney General to delay enforcement of the CAIA and stay xAI’s lawsuit. The joint motion states that a delay is warranted because the law may be revised and because the AG needs to conduct rulemaking before enforcement.

On May 1, Senator Rodriguez (D), Senate Majority Leader and primary sponsor of the CAIA in 2024, introduced SB 189, largely incorporating the proposed revisions announced by Colorado Governor Polis’ workgroup last month.

TLDR on the Proposed Revisions:

Revised Scope: Moves from “High-Risk AI” to “Covered ADMT” (automated decision-making technology). Covered ADMT is any technology that “materially influences” (defined as being a non-de minimis factor that affects the outcome of) a consequential decision, which, in turn, is defined as a decision relating to a consumer’s provision of, access to, selection for, or compensation for a covered domain (e.g., education, employment, or housing). Compared to the CAIA, this creates a higher threshold for AI involvement.

Shift Away from Algorithmic Discrimination: Removes the algorithmic discrimination provisions and shifts generally towards unenumerated known risks.

Pared Down Requirements: Removes most governance provisions and focuses on four key components: (1) developer documentation to deployers; (2) deployer transparency to consumers (a) when ADMT is used for a consequential decision, (b) when an adverse outcome results from an ADMT, and (c) instructions on how to exercise consumer rights; (3) consumer rights of correction (as provided under the Colorado Privacy Act (CPA)), and opportunity for human review and reconsideration when there is an adverse outcome; and (4) liability provisions.

Delayed Effective Date: Extends the effective date to January 1, 2027.

Compared to the workgroup’s version, SB 189 makes some minor but notable changes, including a clarification that certain CPA entity-level exemptions do not relieve deployers of their obligation to provide consumer rights of correction and review under the Act. For example, although the CPA exempts employee data, employers deploying covered ADMTs must still provide employees correction rights under this Act, even though the Act incorporates the CPA's correction rights.

The Colorado legislature adjourns May 13, leaving less than two weeks for legislative negotiations. Some policy considerations regarding the revisions and the lawsuit are worth observing:

Proposed Revisions Address Some but Not All Constitutional Concerns: The proposed revisions address only some of the constitutional issues raised in xAI's complaint. Removing the discrimination provisions may resolve First Amendment concerns about compelled ideological speech and related due process claims. However, the revisions don’t seem to address xAI's second First Amendment theory: that Grok's outputs constitute protected speech and that any regulation of those outputs is an impermissible restriction on speech. Nonetheless, if the lawsuit’s primary goal was political pressure and cultural messaging, these revisions may offer enough cover for plaintiffs to declare victory in their stated fight against ‘woke AI’ without ever testing the constitutional merits.

xAI’s Potential Lack of Standing: While the outcome of this lawsuit could create important precedent on whether and when AI laws violate the First Amendment, it faces threshold standing questions that a court could resolve without reaching the constitutional merits. The CAIA’s developer provisions (in both the current and revised text) apply to entities that design an AI system for high-risk decisional use in areas such as employment, housing, healthcare, education, and financial services. Whether this covers general-purpose conversational AI like Grok is debatable, but both the current text and the proposed revisions also explicitly exempt interactive technologies that provide users information (“chatbots”) if subject to an acceptable use policy (Sec. 6-1-1701(9)(b)), a consideration notably absent from xAI’s complaint. If xAI falls outside the law’s scope, the court need not address the constitutional claims.

1A Challenges Against AI Laws Have Found Mixed Success: xAI's CAIA lawsuit is not the first to seek a preliminary injunction against an AI law on First Amendment grounds, nor is it xAI's first attempt at this strategy. Similar claims have failed in two prior cases: challenges to New York's Algorithmic Pricing Disclosure Act and to California's AB 2013 (generative AI transparency), the latter also brought by xAI. In each case, the court applied the more permissive compelled commercial speech standard and found the government had a sufficient interest in ensuring consumers receive adequate information for informed decision-making. In contrast, a court did issue a preliminary injunction against California’s AB 2839 (prohibiting deepfakes in elections), finding that the law was not the least restrictive means of protecting electoral integrity.

3. Connecticut AI Bill Finally Makes It to the Finish Line, Alongside Another Privacy Update

After two years of attempts, Connecticut Senator Maroney (D) has finally shepherded his omnibus AI package, SB 5, out of the legislature, and Governor Lamont has signaled support for signing it. Concurrently, Senator Maroney has also advanced amendments to the Connecticut Data Privacy Act (CTDPA) through SB 4. The twin bills tackle distinct but related issues: SB 5 imposes transparency and oversight requirements on chatbots, employment AI, social media, and generative AI systems, while SB 4 addresses data governance through amendments to the privacy law, data broker oversight, and algorithmic pricing restrictions.

TLDR on Connecticut SB 4 and 5:

SB 4 - Data Brokers, Personalized Pricing, and Privacy

Creates a data broker registry;

Requires businesses to disclose when personalized pricing is used and prohibits retailers and delivery services from engaging in surveillance pricing;

Updates the CTDPA by (a) narrowing the definition of publicly available information and providing consumers the right to delete inferences based therefrom, (b) prohibiting the sale of precise geolocation data, and (c) requiring businesses using facial recognition for security purposes to disclose such use; and

Creates new obligations for direct-to-consumer genetic testing companies.

SB 5 - Chatbots, AEDT, Social Media, GenAI, and more

Requires companion chatbot operators to implement safety protocols, disclosures, and minor-specific protections;

Requires entities using automated employment decision tools (AEDT) to disclose such use and amends the state’s anti-discrimination law to include automated decisionmaking;

Requires social media platforms to implement age verification and obtain parental consent for some enumerated features;

Requires generative AI providers to implement provenance data disclosures; and

Establishes whistleblower protections for employees of frontier developers.

Some History: In 2024, Senator Maroney (D - CT) and Del. Maldonado (D - VA) spearheaded a framework to address algorithmic discrimination in “high-risk” areas. After the Virginia bill failed to move and the Connecticut bill (SB 2) faced a threatened gubernatorial veto, similar legislation was introduced and enacted in Colorado by Senator Rodriguez as SB 205, the Colorado AI Act. The following year, both Virginia and Connecticut attempted to pass AI legislation under substantially different political conditions: a new presidential administration and an increasingly vocal AI accelerationist movement opposed state-level AI regulation. Virginia's bill took a significantly narrower approach but was still vetoed by Governor Youngkin (R). Connecticut's bill, also substantially narrowed from the prior year, did not advance past the Senate amid a similar threatened gubernatorial veto. However, minor AI-related amendments to Connecticut's data privacy law were enacted, and the legislature established the Connecticut AI Academy to facilitate AI workforce development.

The Land of Steady Habits: My colleague Jordan Francis jokes that “True to its namesake as the ‘Land of Steady Habits,’ Connecticut is developing the habit of amending the CTDPA.” Since the CTDPA’s enactment in 2022 (which made Connecticut the fifth state to enact a comprehensive privacy law), it has been amended twice, with SB 4 marking the third amendment. These amendments have largely tracked broader policy shifts in the U.S. privacy landscape, such as the heightened protections for minors and health data enacted in 2023 following the Dobbs decision and an increased state-level focus on children's privacy. This year's amendments addressing data brokers and precise geolocation data similarly follow recent legislative privacy trends, reflecting concerns about federal immigration enforcement agencies purchasing personal data from commercial brokers. The facial recognition amendment, by contrast, is likely responding to a state-specific enforcement concern raised by the state Attorney General in their 2025 report.

4. Maryland Enacts Data-Driven Pricing Law

On April 28, Maryland Governor Wes Moore (D) signed HB 895, “The Protection from Predatory Pricing Act,” prohibiting food retailers and third-party service providers from using personalized prices based on consumer personal data to charge higher prices for tax-exempt food. It also prohibits these entities from using protected class data to offer, advertise, or sell goods or services in ways that deny or withhold accommodations accorded to others. The bill includes exceptions for standard practices such as loyalty programs, subscription-based contracts, and pricing differences based on costs, supply, or demand.

The Data-Driven Pricing Trend: Though touted as the first enacted bill to ban data-driven pricing in the food sector, Maryland's bill is neither the first on data-driven pricing nor the first to impose sector-specific bans. In 2025, three states enacted four related laws: New York's Algorithmic Pricing Disclosure Act (disclosures for "personalized algorithmic pricing"), New York S 7882 (prohibiting residential property owners from coordinating prices through "algorithmic devices"), California SB 325 (barring the use of "common pricing algorithms" for rental price collusion), and Connecticut HB 8002 (prohibiting algorithmic rental pricing based on nonpublic competitor data). This year, two other data-driven pricing bills have crossed chambers: Colorado HB 1210 (prohibiting the use of surveillance data to set individualized prices or wages) and Hawaii HB 2458 (prohibiting retailers from using surveillance pricing in certain federal program food sales).

Criticism from Advocates and Industry Alike: As detailed in a recent New York Times article, the new law faces criticism from both the retail industry and privacy advocates. Retailers argue it's redundant because state consumer protection law already prohibits such practices and because no "substantiated complaints" demonstrate predatory pricing patterns at grocery stores. In contrast, consumer advocates argue the bill contains significant loopholes: it exempts customer loyalty programs, which collect substantial consumer data, and prohibits only price increases (not decreases), which enables retailers to raise baseline prices while selectively discounting for targeted consumers, effectively increasing costs for most shoppers.

Hot From the Presses:

2026 Chatbot Legislation Tracker: Highlights chatbot-related legislation in 2026, including bills that have passed at least one legislative chamber. This tracker reflects a subset of FPF’s broader legislative tracking work.

Responsible Use of Personal Data in Pricing: Provides an overview of how data is used in data-driven pricing, how it is regulated under existing law, and best practices.

Consumer Health AI Architecture: Discusses how the use of consumer-facing LLMs for personal health is shifting the underlying technical architecture of these systems.

Adapting Privacy to the Changing Times: Explores how privacy professionals can strategically demonstrate the value proposition of their work and team through trusted collaborations within organizations.

Third-Party Cybersecurity Risks in AI: Describes the landscape of emerging cybersecurity risks in AI-enabled supply chains and related governance.

That’s all for now. Thank you for reading, and see you in June!

The Algorithmic Update is a monthly newsletter highlighting key legislative, regulatory, and legal developments in privacy, AI, and tech policy. FPF members receive weekly legislative updates with deeper analysis and tracking, as well as member-exclusive resources—learn more here or contact membership@fpf.org.

Tatiana Rice is the Senior Director of Legislation at the Future of Privacy Forum (FPF).